
Insert into ORDERS ( ORDER_DATE, AMOUNT, USER_ID, DESCRIPTION) This generated 10000 users in the USERS table with random names. +-+-ġ | iooifgiicuiejiaeduciuccuiogib | iooifgiicuiejiaeduciuccuiogibĢ | iooifgiicuiejiaeduciuccuiogib | dgdfeeiejcohfhjgcoigiedeaubjbgģ | dgdfeeiejcohfhjgcoigiedeaubjbg | iooifgiicuiejiaeduciuccuiogibĤ | ueuedijchudifefoedbuojuoaudec | iooifgiicuiejiaeduciuccuiogibĥ | iooifgiicuiejiaeduciuccuiogib | ueuedijchudifefoedbuojuoaudecĦ | dgdfeeiejcohfhjgcoigiedeaubjbg | dgdfeeiejcohfhjgcoigiedeaubjbgħ | iooifgiicuiejiaeduciuccuiogib | jbjubjcidcgugubecfeejidhoigdobĨ | jbjubjcidcgugubecfeejidhoigdob | iooifgiicuiejiaeduciuccuiogibĩ | ueuedijchudifefoedbuojuoaudec | dgdfeeiejcohfhjgcoigiedeaubjbgġ0 | dgdfeeiejcohfhjgcoigiedeaubjbg | ueuedijchudifefoedbuojuoaudec Select * from USERS order by user_id fetch first 10 rows only Insert into USERS (FIRST_NAME, LAST_NAME) The query would be much faster with clustered data, and each RDBMS has some ways to achieve this, but I want to show the cost of joining two tables here without specific optimisation.

This means that ORDERS from one USER are scattered throughout the table. USERS probably come without specific order. It is important to build the table as they would be in real life. Both with an auto-generated primary key for simplicity.

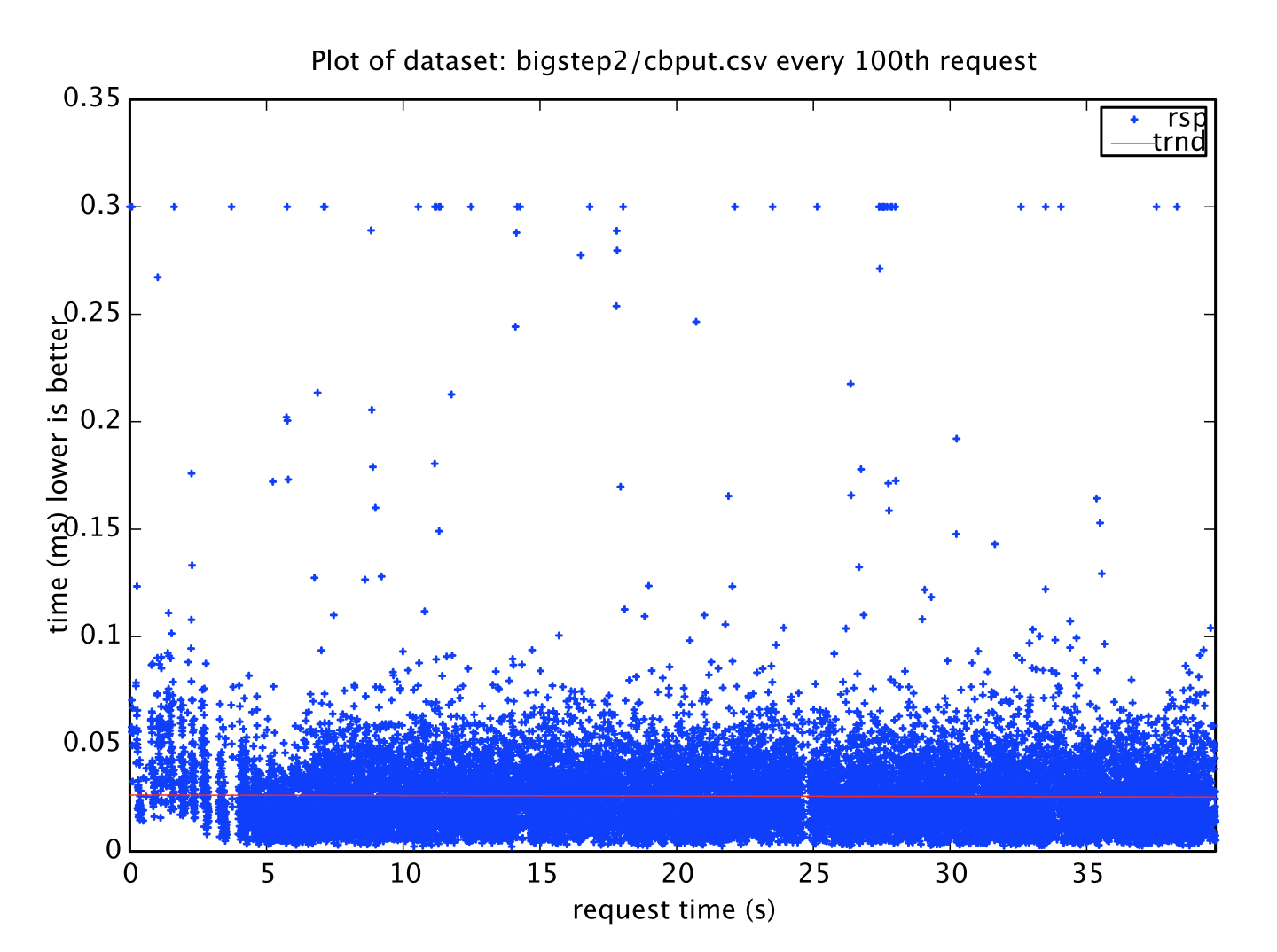

I have created the two tables that I’ll join. But let’s start with very simple tables without specific optimization.Ĭreate table USERS ( USER_ID bigserial primary key, FIRST_NAME text, LAST_NAME text)Ĭreate table ORDERS ( ORDER_ID bigserial primary key, ORDER_DATE timestamp, AMOUNT numeric(10,2), USER_ID bigint, DESCRIPTION text) And then maybe investigate further the features for big tables (partitioning, parallel query,…). You can run the same with larger tables if you want to see how it scales. Because when reading the execution plan, I don’t need large tables to estimate the cost and the response-time scalability. You will multiply your tables by several orders of magnitude before the number of rows impact the response time by a 1/10s of millisecond. Join and Group By here do not depend on the size of the table at all but only the rows selected.Performance is not a black box: all RDBMS have an EXPLAIN command that display exactly the algorithm used (even CPU and memory access) and you can estimate the cost easily from it.I will build those tables, in PostgreSQL here, because that’s my preferred Open Source RDBMS, and show that: It says that “As the size of your tables grow, these operations will get slower and slower.” There are a ton of factors that impact how quickly your queries will return.” with an example on a query like: “SELECT * FROM orders JOIN users ON … WHERE user.id = … GROUP BY … LIMIT 20”. The article claims that “there’s one big problem with relational databases: performance is like a black box. That’s why relational database are the king of OTLP applications.

This access method is actually not dependent at all on the size of the tables. And when we compare to a key-value store we are obviously retrieving few rows and the join method will be a Nested Loop with Index Range Scan. But all relational databases come with B*Tree indexes. That would be right with non-partitioned table full scan. But this supposes that the cost of a join depends on the size of the tables involved (represented by N and M). This makes reference to “time complexity” and “Big O” notation. And the time complexity of joins is “( O (M + N) ) or worse” according to Alex DeBrie article or “ O(log(N))+Nlog(M)” according to Rick Houlihan slide. And that it is a CPU intensive operation. The idea in this article, taken from the popular Rick Houlihan talk, is that, by joining tables, you read more data. You should choose a solution for the features it offers (the problem it solves), and not because you ignore what you current platform is capable of.

There are many use cases where NoSQL is a correct alternative, but moving from a relational database system to a key-value store because you heard that “joins don’t scale” is probably not a good reason. I’m challenging some widespread assertions and better do it based on good quality content and product. And because AWS DynamoDB is probably the strongest NoSQL database today. Actually, I’m taking this article as reference because the author, in his website and book, has really good points about data modeling in NoSQL. But I want to make clear that my post here is not against this article, but against a very common myth that even precedes NoSQL databases. I’ll reference Alex DeBrie article “ SQL, NoSQL, and Scale: How DynamoDB scales where relational databases don’t“, especially the paragraph about “Why relational databases don’t scale”.
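To make the Big O argument concrete, here is a back-of-envelope model in Python. It is a simplified sketch, not a measurement of PostgreSQL. It counts the pages a Nested Loop with Index Range Scan would touch to fetch one user and that user's orders, and compares this with the rows read by a join that scans both tables. The fanout of 200 entries per B*Tree page, the 10 orders per user, the index on ORDERS(USER_ID) and all function names are assumptions for illustration.

```python
import math

# Illustrative assumption: index entries per B*Tree page.
# Real fanout depends on key size and page size; this is not a PostgreSQL constant.
FANOUT = 200


def btree_depth(rows: int, fanout: int = FANOUT) -> int:
    """Levels (pages from root to leaf) needed to index `rows` entries."""
    depth, capacity = 1, fanout
    while capacity < rows:
        depth += 1
        capacity *= fanout
    return depth


def nested_loop_pages(users: int, orders: int, orders_per_user: int) -> int:
    """Estimated pages touched to join one user with their orders using
    a Nested Loop with Index Range Scan (simplified model)."""
    # Primary-key lookup on USERS: descend the index, then read one table page.
    user_lookup = btree_depth(users) + 1
    # Range scan on an assumed index on ORDERS(USER_ID): descend the inner
    # levels, then read the leaf page(s) holding this user's entries.
    leaf_pages = max(1, math.ceil(orders_per_user / FANOUT))
    range_scan = (btree_depth(orders) - 1) + leaf_pages
    # Orders are scattered, so assume one table page per matching order.
    table_pages = orders_per_user
    return user_lookup + range_scan + table_pages


def full_scan_rows(users: int, orders: int) -> int:
    """Rows read by a join that scans both tables: the O(M + N) case."""
    return users + orders


if __name__ == "__main__":
    # Hypothetical sizes: 10 orders per user, scaled by four orders of magnitude.
    for users in (10_000, 1_000_000, 100_000_000):
        orders = users * 10
        print(f"users={users:>11,} orders={orders:>13,} "
              f"nested loop pages={nested_loop_pages(users, orders, 10):>3} "
              f"full scan rows={full_scan_rows(users, orders):>13,}")
```

Under these assumptions, the nested-loop estimate goes from 16 to 19 pages while both tables grow 10,000 times, whereas the full-scan row count goes from 110,000 to 1,100,000,000. Only the B*Tree depth depends on the table size, and it grows logarithmically; the number of orders selected dominates.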
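The post relies on PostgreSQL's EXPLAIN to show the join algorithm. As a self-contained stand-in that runs with only the Python standard library, the sketch below uses SQLite's EXPLAIN QUERY PLAN instead. SQLite is not PostgreSQL and formats its plans differently. The DDL is adapted to SQLite (INTEGER PRIMARY KEY instead of bigserial). The index ORDERS_USER_ID, the random data generator and the simplified one-user query are hypothetical choices for illustration, not the article's exact setup.

```python
import random
import sqlite3

# Simplified, hypothetical version of the article's query shape:
# one user, joined to their orders, then aggregated.
QUERY = """
select USERS.USER_ID, count(*), sum(ORDERS.AMOUNT)
from ORDERS join USERS on ORDERS.USER_ID = USERS.USER_ID
where USERS.USER_ID = ?
group by USERS.USER_ID
"""


def build(users: int, orders_per_user: int, seed: int = 0) -> sqlite3.Connection:
    """Create and fill the two tables in an in-memory SQLite database."""
    con = sqlite3.connect(":memory:")
    # SQLite adaptation of the PostgreSQL DDL: INTEGER PRIMARY KEY instead of bigserial.
    con.execute("create table USERS (USER_ID integer primary key,"
                " FIRST_NAME text, LAST_NAME text)")
    con.execute("create table ORDERS (ORDER_ID integer primary key,"
                " ORDER_DATE text, AMOUNT numeric, USER_ID integer, DESCRIPTION text)")
    # Assumption: a B*Tree index on the join column of ORDERS.
    con.execute("create index ORDERS_USER_ID on ORDERS (USER_ID)")
    con.executemany("insert into USERS (FIRST_NAME, LAST_NAME) values (?, ?)",
                    ((f"first{i}", f"last{i}") for i in range(users)))
    rng = random.Random(seed)
    # A random USER_ID per order, so one user's orders are scattered in the table.
    con.executemany(
        "insert into ORDERS (ORDER_DATE, AMOUNT, USER_ID, DESCRIPTION)"
        " values (datetime('now'), ?, ?, 'hypothetical order')",
        ((rng.randint(100, 100_000) / 100, rng.randint(1, users))
         for _ in range(users * orders_per_user)))
    con.commit()
    return con


def plan(con: sqlite3.Connection, user_id: int = 42) -> list[str]:
    """Return the 'detail' column (the last one) of EXPLAIN QUERY PLAN."""
    return [row[-1] for row in con.execute("explain query plan " + QUERY, (user_id,))]


if __name__ == "__main__":
    for users in (1_000, 20_000):
        print(users, plan(build(users, orders_per_user=10)))
```

For both sizes, the plan should contain only SEARCH steps (a primary-key lookup on USERS and a lookup through ORDERS_USER_ID on ORDERS) and no SCAN of a whole table. In other words, the algorithm chosen does not change with the number of rows stored.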
